🐻 Deep Learning Image Recognition: 3 Projects in One

I have been working tirelessly on perfecting my image classification skills, thanks to my new fascination with fast.ai and the work of Jeremy Howard and his team. Today, I made three...almost four...web apps. I got to know Hugging Face quite well, and I honestly have had a fantastic time on these projects. But then, I feel like I always deeply enjoy this work. Well, I guess that is closer to ALMOST always (it depends on how my accuracy numbers are looking and how many bugs are crawling around). But I digress. The projects!

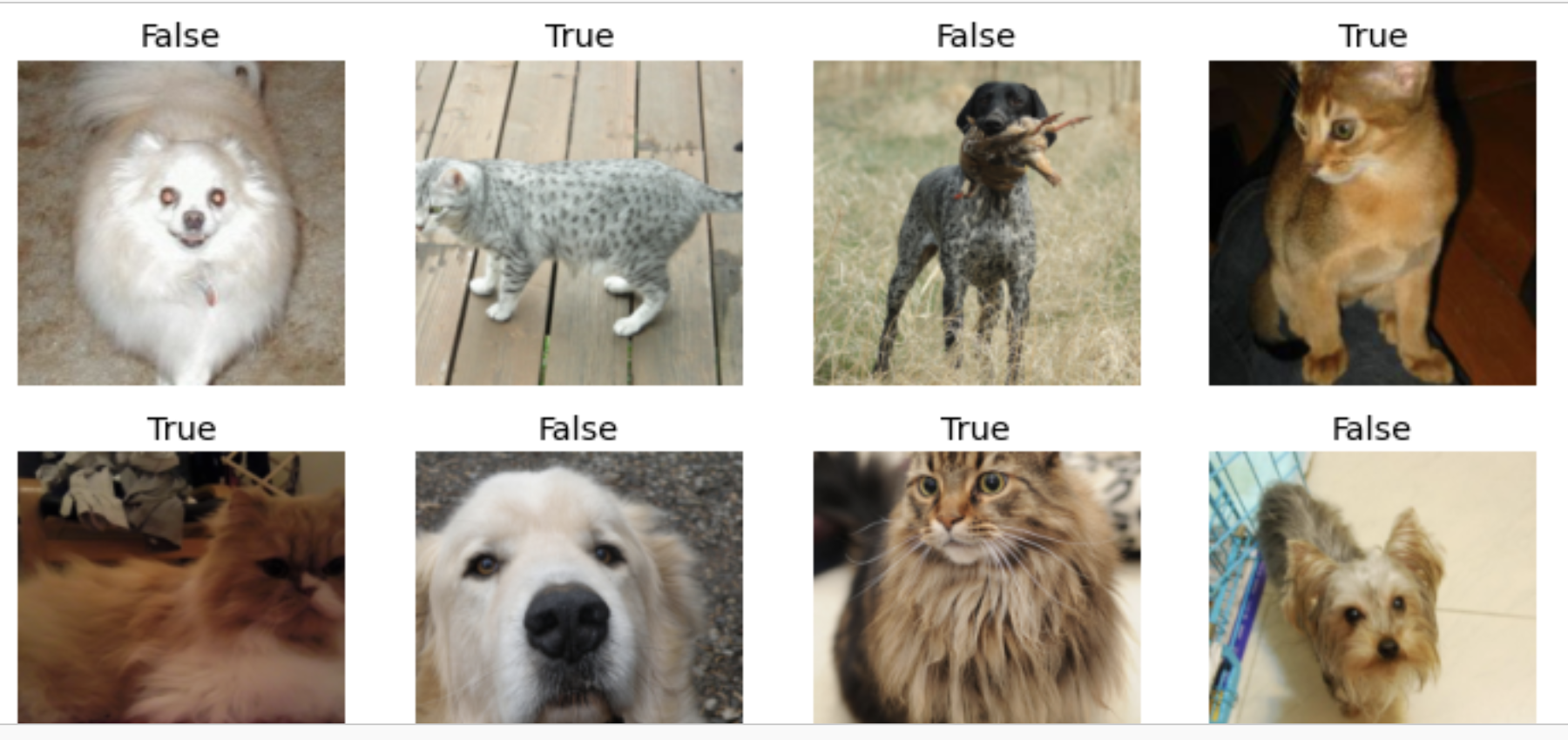

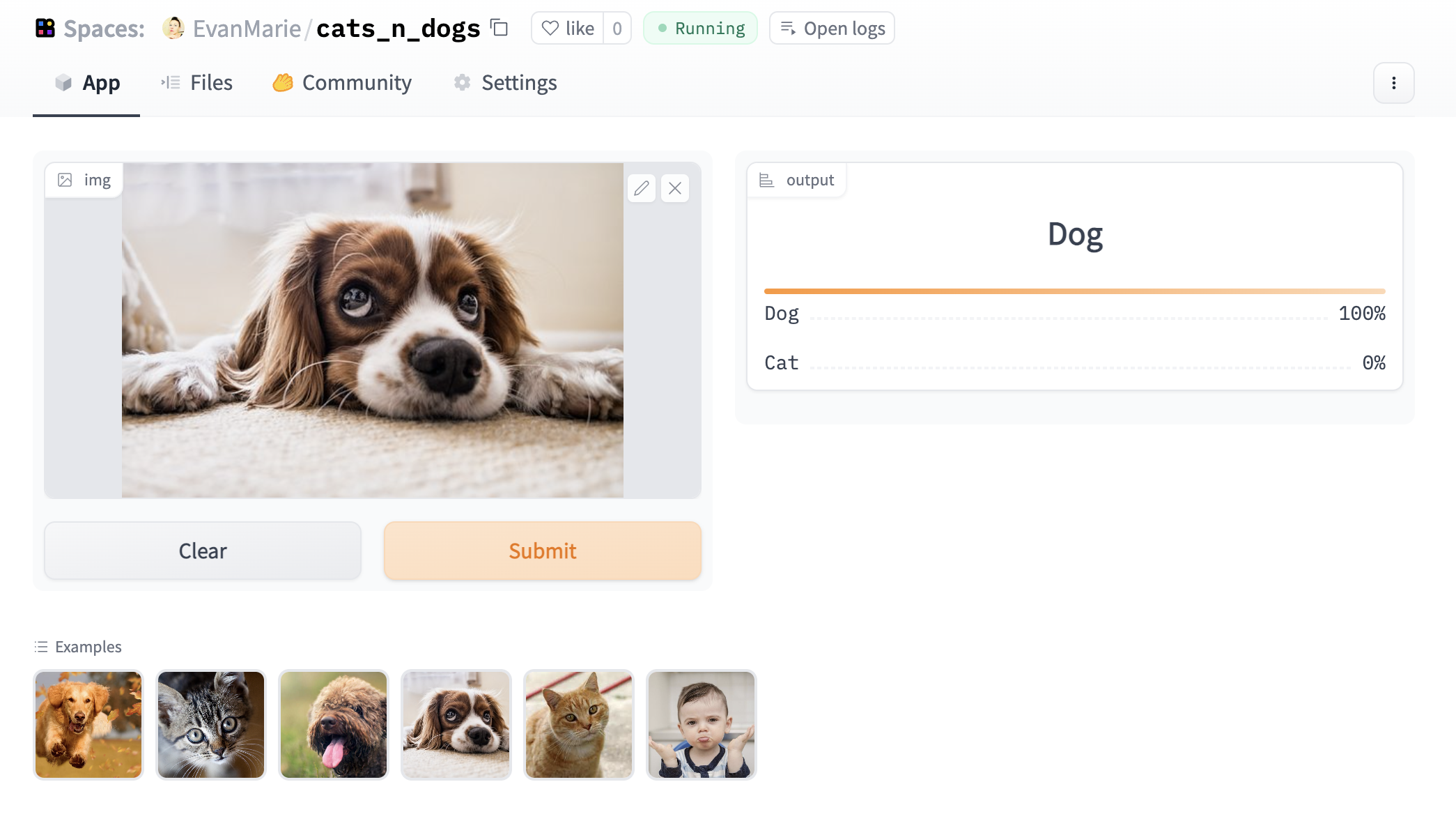

The first, aptly named "Cat or Dog!?", can differentiate between a dog and a cat with close to 100% accuracy when given an image. For this project, I used a dataset from the fast.ai library. This was clearly the simplest of the three, but I was nevertheless impressed with how streamlined the process is when using the fast.ai framework. It quite literally becomes an experience like playing a game. It is fun and rewarding, and at the same time, I am learning state-of-the-art deep learning skills. I cannot imagine anything more ideal!

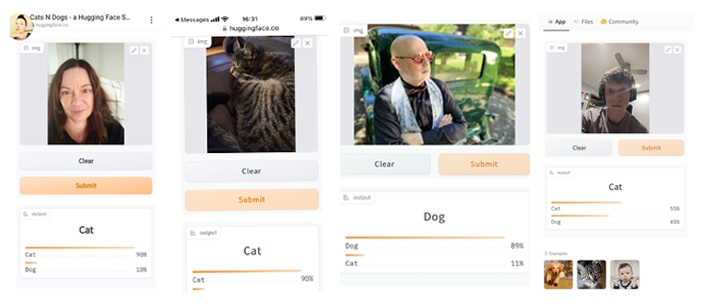

So, can you imagine the pics I got messaged when I first sent this out to my friends? ONE person actually input a picture of his cat. EVERYONE else was obsessed with inputting either themselves or various other people or things to see what the model came up with in comparison. My children spent an hour and a half inputting their favorite anime characters! And I was honestly surprised at some of the results. I always thought my ex was a cat. But he was 90% dog! Here are some of the texts I got:

Cat or Dog?! the full code: PDF | Jupyter

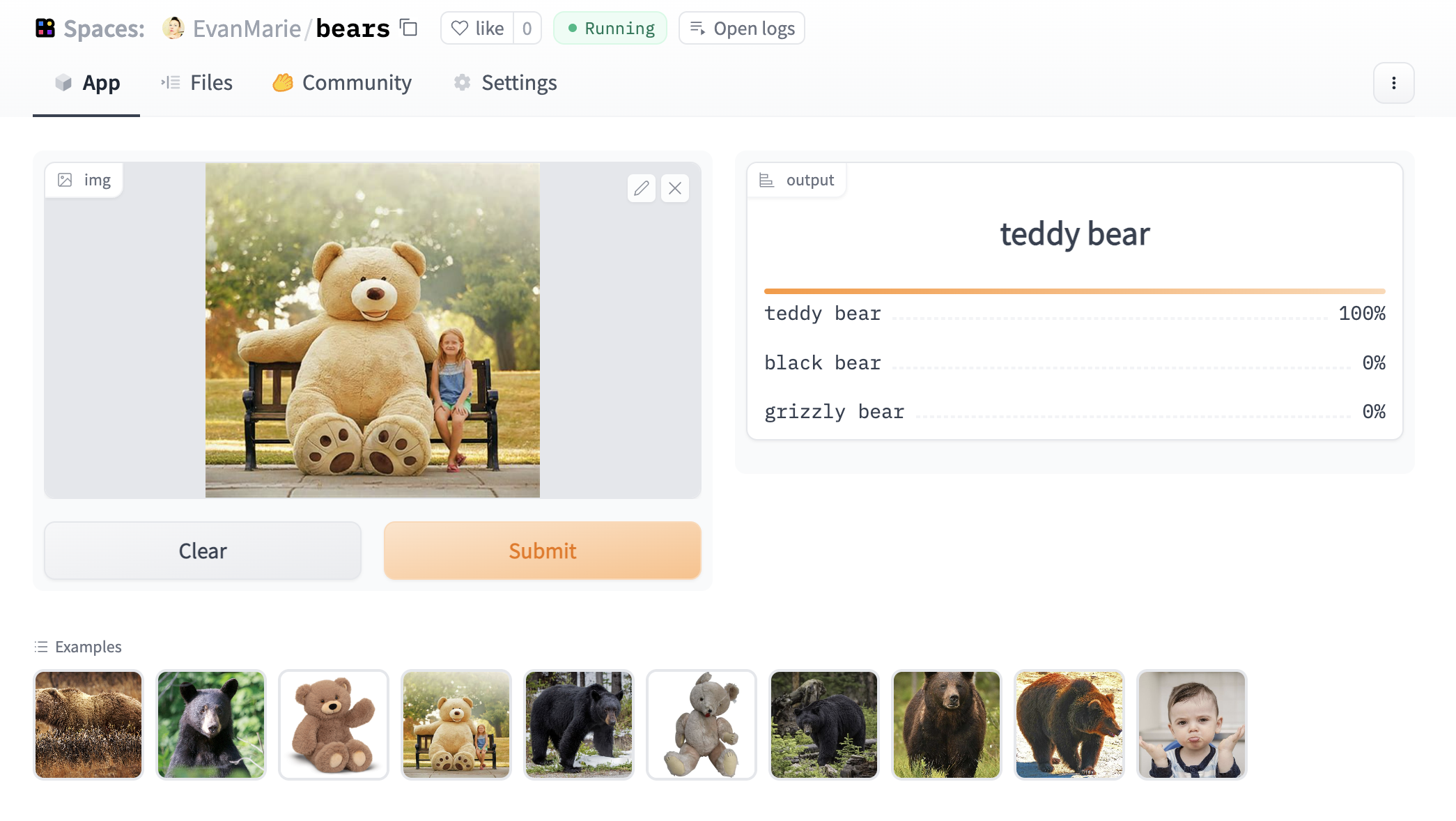

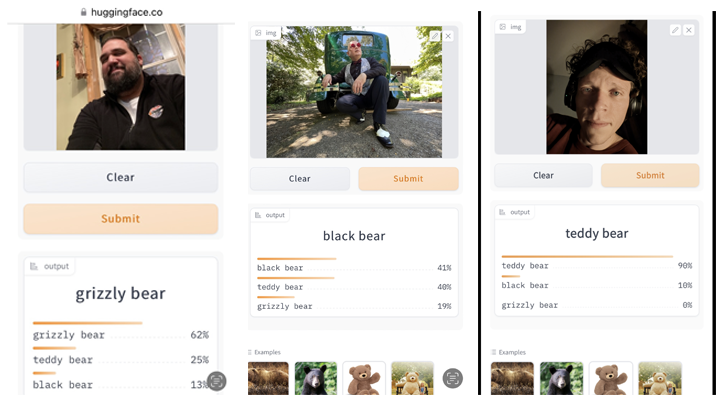

The second project, to which I have gifted the name "Grizzlies and Teddies and Black Bears, OH MY!", can differentiate between a grizzly bear, a black bear, and a teddy bear with approximately the same accuracy as the first project. I had a great deal of fun with this one! It was a bit trickier, now having three classes rather than two. But with just a bit of tweaking, the model was unbelievably accurate at differentiating between black bears and grizzly bears, something I honestly was not always 100% sure of when performing the same task with my human eyes and brain.
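Going from two classes to three mostly changes the last step of the model: instead of one "cat or dog" call, it scores every class and picks the largest. Here is a minimal sketch of that step, assuming the model ends in a softmax, as single-label image classifiers commonly do. The class order, the `predict` helper, and the raw scores are all made up for illustration; this is not the code behind the app.

```python
import math

# Hypothetical class names, matching the three bear categories in this post.
CLASSES = ["black", "grizzly", "teddy"]

def softmax(scores):
    """Turn raw model scores into probabilities that sum to 1."""
    # Subtract the largest score first for numerical stability.
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def predict(scores, classes=CLASSES):
    """Return the most likely class and its probability."""
    probs = softmax(scores)
    best = max(range(len(probs)), key=probs.__getitem__)
    return classes[best], probs[best]

# Made-up raw scores for one image, for illustration only.
label, confidence = predict([0.5, 3.0, -1.0])
print(f"{label}: {confidence:.0%}")
```

The same probabilities are where a result like "90% dog" comes from: the model has to spread 100% across the classes it knows, even when the photo is of a person.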

And once again, the messages started pouring in. This time, I was not so surprised. Somehow the bear classification of my friends made COMPLETE sense to me!

Grizzlies and Teddies and Black Bears, OH MY! the full code: PDF | Jupyter

For the third and fourth projects, I am still working on accuracy, because I decided to dive into human faces after my work with animals. And wow, is that a different ballgame!

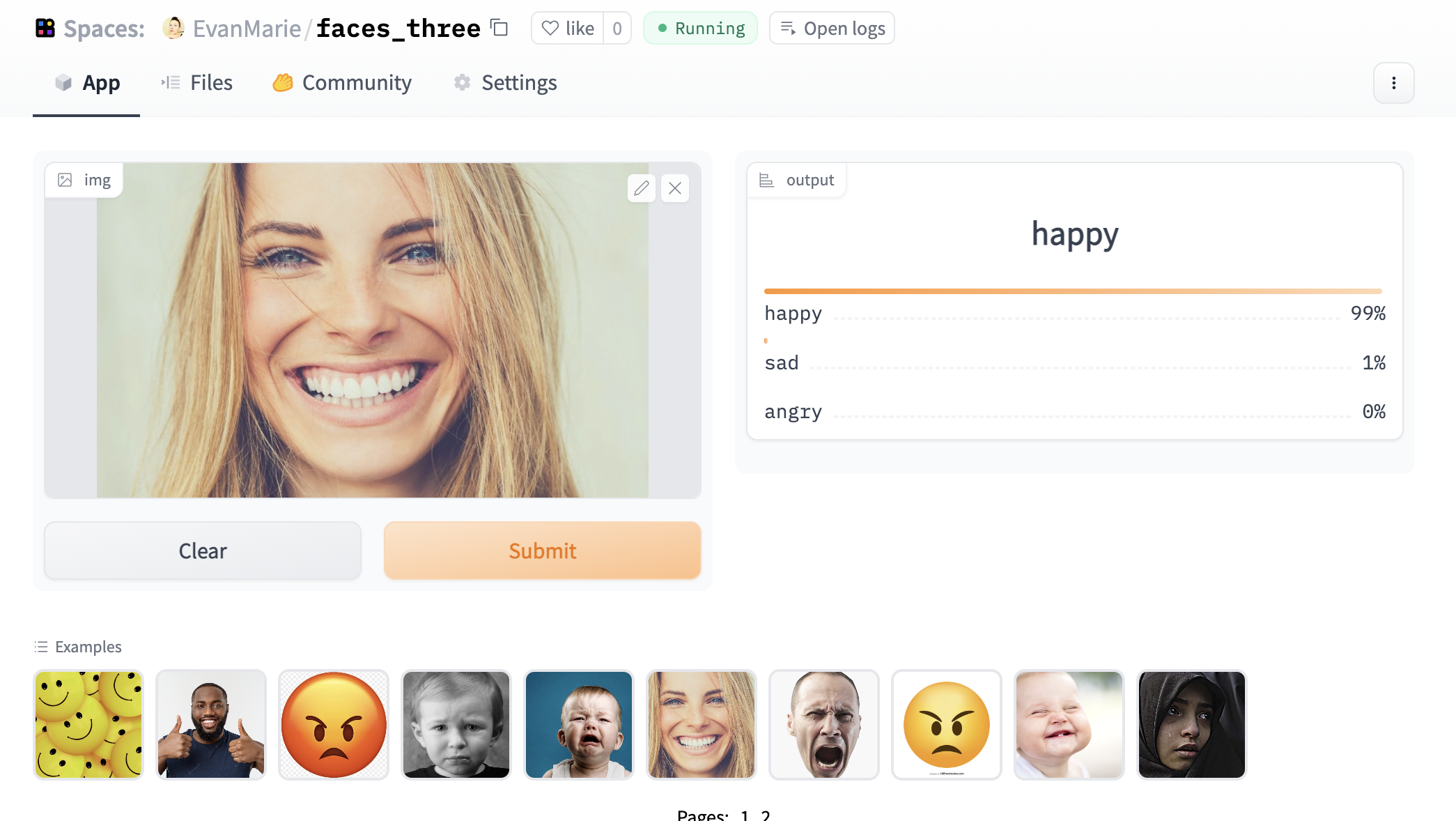

My third little app - Happy, Sad, or Angry - is meant to differentiate between a human with a happy face, a sad face, and an angry face. Detecting a happy face is apparently quite easy, except for babies when they make odd little happy faces. But boy, do sadness and anger overlap! I never would have imagined the similarities between a sad and an angry face. But now I can see it. I can see what is confusing the model, and I am working to figure out how to help it see as I see. I currently have it at 78% accuracy, which, I am now realizing, is actually pretty high. And I would have gotten higher if Google had not kicked me off in the middle of training another model. I will be signing up for a more robust GPU service tomorrow. Sigh!
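One way to see exactly which emotions are being mixed up is to count every (actual, predicted) pair, which is the idea behind a confusion matrix. Below is a minimal sketch in plain Python. The labels are invented for illustration and are not results from the app, and `confusion_counts` and `accuracy` are hypothetical helper names.

```python
from collections import Counter

def confusion_counts(actual, predicted):
    """Count how often each (actual, predicted) pair occurs."""
    return Counter(zip(actual, predicted))

def accuracy(actual, predicted):
    """Fraction of predictions that match the true label."""
    correct = sum(a == p for a, p in zip(actual, predicted))
    return correct / len(actual)

# Made-up labels for ten validation images, for illustration only.
actual = ["happy", "happy", "happy", "happy",
          "sad", "sad", "sad",
          "angry", "angry", "angry"]
predicted = ["happy", "happy", "happy", "happy",
             "sad", "angry", "sad",
             "sad", "angry", "angry"]

counts = confusion_counts(actual, predicted)
print(f"accuracy: {accuracy(actual, predicted):.0%}")

# List only the mistakes, so the confused pairs stand out.
for (a, p), n in sorted(counts.items()):
    if a != p:
        print(f"{n} x {a} mistaken for {p}")
```

In fastai, `ClassificationInterpretation.from_learner(learn).plot_confusion_matrix()` draws the same kind of table for a trained learner.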

And my fourth project is going to build upon the third and throw in surprise and fear! That's gonna be really fun. I am currently focused on using different types of data augmentation to try to help the model see the small differences between such algorithmically similar emotional expressions. I also quadrupled both my data and my training epochs. It is helping. But I am honestly never satisfied until my accuracy is in the high 90s. I go for the GRADE!
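Data augmentation means showing the model slightly altered copies of each training image, so it learns the expression rather than the exact pixels. Here is a minimal sketch using a tiny grayscale grid in plain Python. The two transforms (a mirror flip and a brightness change), the 0.8 to 1.2 range, and the helper names are assumptions chosen for illustration, not the augmentations used in this project.

```python
import random

def flip_horizontal(image):
    """Mirror a grayscale image (a list of pixel rows) left to right."""
    return [row[::-1] for row in image]

def adjust_brightness(image, factor):
    """Scale every pixel by factor, clamping to the 0-255 range."""
    return [[min(255, max(0, round(p * factor))) for p in row] for row in image]

def augment(image, rng):
    """Return a randomly altered copy of image; the original is untouched."""
    out = [row[:] for row in image]
    # Flip about half of the time.
    if rng.random() < 0.5:
        out = flip_horizontal(out)
    # Darken or brighten by up to 20 percent.
    return adjust_brightness(out, rng.uniform(0.8, 1.2))

# A tiny made-up 2x3 "image", for illustration only.
face = [[10, 120, 250],
        [30, 140, 200]]

rng = random.Random(0)  # fixed seed so repeated runs match
variants = [augment(face, rng) for _ in range(4)]
for v in variants:
    print(v)
```

In fastai, transforms of this kind are usually passed to the data loaders through `aug_transforms` rather than written by hand.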

Happy, Sad, or Angry? the full code: PDF | Jupyter

Check back for more fun projects soon! I will have much more to show!!